The gap between an AI demo and a production AI system comes down to one word: guardrails. Every team deploying LLMs at scale has learned this — often through expensive, embarrassing failures. Raw model intelligence does not buy you reliability. An analysis of 1,200 production LLM deployments confirmed what practitioners already suspected: the most successful organizations engineer the system around the model instead of waiting for the model to get smarter. Meanwhile, 68% of organizations running LLMs without adequate guardrails reported security incidents in 2024, and Forrester estimates global financial losses from hallucinated AI outputs hit $67.4 billion that same year. The teams shipping dependable AI treat guardrails as infrastructure — not afterthoughts.

What does that infrastructure look like? Four engineering pillars keep showing up across production deployments: confidence thresholds that know when to defer, output validation that enforces correctness, human-in-the-loop escalation that catches what automation misses, and audit trails that make every decision traceable. Here is how teams wire them together — and what goes wrong when they skip a layer.

Confidence scores determine what gets automated and what doesn't

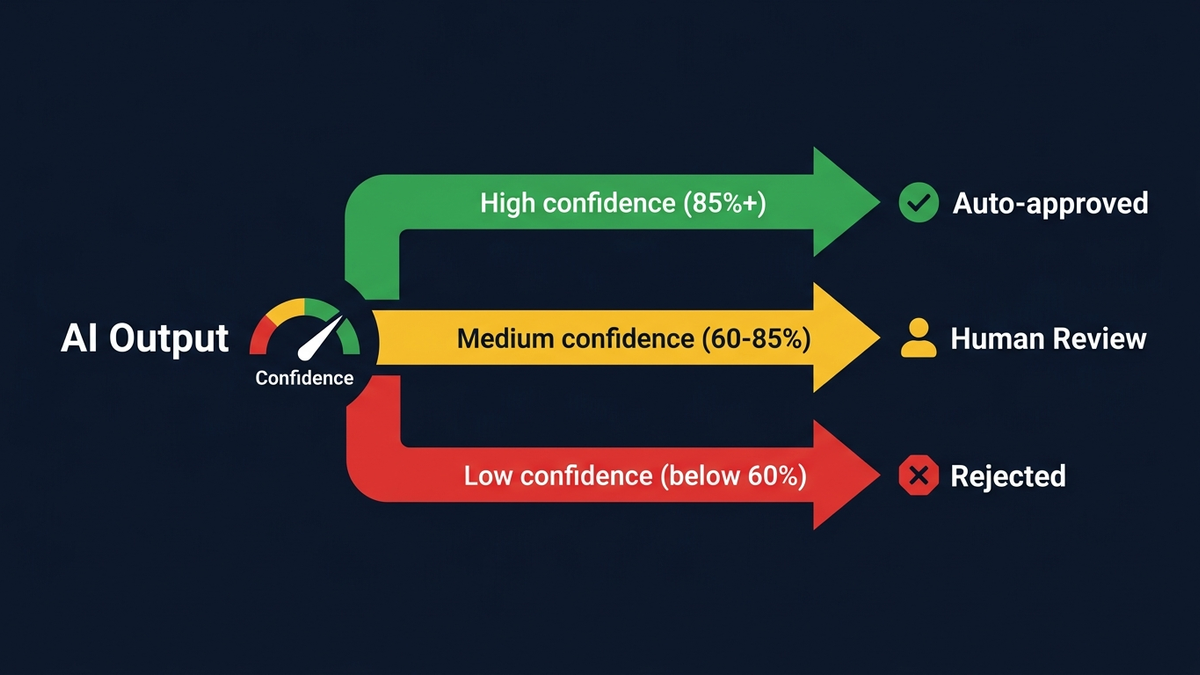

Every production AI decision starts with a question: how certain is the model? Confidence thresholds translate that certainty into action. The standard architecture uses three tiers. Outputs above 0.85–0.95 confidence get auto-approved. Scores between 0.60 and 0.85 route to human review queues. Anything below 0.60 gets rejected or triggers full escalation. Not arbitrary cutoffs — these numbers are calibrated against domain-specific risk tolerances and adjusted continuously.

For LLM-based systems, confidence typically derives from log probabilities (logprobs), which OpenAI exposes through its Chat Completions API. A logprob of -0.693 translates to roughly 50% probability for a given token. Engineers aggregate these across output tokens using linear probability averaging or perplexity scores. For classification tasks, the logprob of the predicted class token serves directly as the confidence score. In RAG systems, a common pattern has the model output a has_sufficient_context_for_answer boolean — the logprob on that token becomes the retrieval quality signal.

But raw logprobs lie. A model reporting 90% confidence might actually be correct only 70% of the time — the “confident hallucination” problem that makes naive threshold-based routing dangerous. Post-hoc calibration techniques like temperature scaling, Platt scaling, and isotonic regression close this gap by mapping raw scores to true probabilities. The Expected Calibration Error (ECE) metric measures how well this works: a well-calibrated model claiming 80% confidence should be right roughly 80% of the time.

Smart practitioners treat thresholds as dynamic business controls, not static config. Rossum, a document AI company, sets its default automation threshold at 0.975 — tolerating only a 2.5% maximum error rate for auto-processed invoices. More nuanced systems vary thresholds by field: invoice numbers demand near-perfect accuracy while vendor names tolerate more flexibility. Some organizations shift thresholds by context — aggressive (0.70) during normal business hours for throughput, conservative (0.90) during month-end close for accuracy. A surprisingly pragmatic approach for a field that loves to over-engineer things.

Then there is abstention — teaching models to say “I don't know.” A 2025 survey in Transactions of the ACL organized abstention strategies across prompting-based approaches, fine-tuning methods like R-tuning, and reinforcement learning with proper scoring rules. Yet AbstentionBench, a benchmark 35 times larger than prior efforts, revealed a sobering truth: even GPT-4 and advanced reasoning models struggle to reliably recognize unanswerable queries. No correlation exists between a model's answer accuracy and its ability to abstain — they are orthogonal capabilities that must be engineered separately. Seriously: your smartest model might also be your most recklessly overconfident one.

Output validation catches what confidence scoring misses

Confidence scores tell you how certain the model is. Output validation tells you whether what it produced is actually correct. These are different problems, and production systems layer multiple validation strategies to address both — from structural enforcement to semantic verification.

Structured output enforcement has come a long way. Structured outputs from providers like OpenAI (launched August 2024) achieve 100% JSON schema compliance through constrained decoding — the system compiles a JSON schema into a grammar that restricts token generation at inference time. Before this? Even GPT-4 produced valid JSON less than 40% of the time on complex schemas. Anthropic followed with its own structured outputs beta in November 2025, offering both JSON mode and strict tool use with constrained decoding on Claude models. Cross-provider libraries like Instructor and LiteLLM now abstract these behind unified Pydantic-based interfaces, making schema enforcement provider-agnostic.

Beyond structural correctness, content validation demands a layered approach. The production pattern that has emerged across the industry follows a five-stage pipeline:

- Input validation catches prompt injection attacks, detects PII, and enforces topic boundaries.

- Retrieval validation (in RAG systems) filters irrelevant chunks and masks sensitive data before they reach the model.

- Generation constraints apply structured outputs and temperature controls.

- Output validation checks for toxicity, hallucination, and format compliance.

- Monitoring tracks confidence drift and feeds corrections back into the system.

Three open-source guardrails frameworks dominate this space — and each has a distinct personality. NVIDIA's NeMo Guardrails uses Colang, a domain-specific language for defining conversational flows and safety rails. It supports input, output, dialog, retrieval, and execution rails with GPU-accelerated detection achieving sub-second latency across five parallel guardrails. Guardrails AI takes a different angle — composable output validators through its Hub, with pre-built checks for regex matching, length validation, PII detection, and toxicity scoring that wrap LLM calls and validate outputs automatically. And Meta's Llama Guard 3 provides multimodal safety classification across 14 harm categories, deployable on-premise for data-sensitive environments. For agent-specific security, Meta's LlamaFirewall (May 2025) uses ML-based classifiers for prompt injection detection, alignment checking, and code safety analysis, achieving over 90% reduction in attack success rates on agent benchmarks.

Hallucination detection remains the hardest validation problem. The best models now achieve sub-1% hallucination rates on summarization benchmarks — Google's Gemini-2.0-Flash leads at 0.7% — but general knowledge hallucination rates still average around 9.2%. That gap matters. RAG reduces hallucinations by 40–71%, yet researchers have proven mathematically that eliminating hallucinations entirely is impossible given current LLM architectures. So production systems stack multiple detection methods: Chain-of-Verification pipelines that generate and independently answer verification questions (reducing hallucinations by up to 53%), semantic entropy that clusters differently-worded answers to measure meaning-level uncertainty, and cross-layer attention probing that flags hallucinations in real-time using lightweight classifiers on model activations.

When AI should hand off to humans — and how to make the handoff clean

The sweet spot for human-in-the-loop escalation in production sits at 10–15% of total decisions. Below that, you are probably rubber-stamping tasks that deserve human eyes. Above 20%, you have a bottleneck. Above 60%, your system needs fundamental recalibration.

Escalation triggers fall into three categories. Confidence-based triggers route low-scoring outputs to review queues — straightforward. Rule-based triggers always escalate specific conditions: financial amounts above a threshold, actions affecting multiple users, first-time task types, or outputs contradicting previous decisions for the same user. Risk-matrix triggers are the most sophisticated, evaluating four dimensions simultaneously: irreversibility of the action, blast radius of a potential error, compliance exposure, and model confidence. The combination determines whether human review is needed. No single factor alone.

But the handoff architecture matters just as much as what triggers it. Three patterns dominate production:

- Pre-action approval pauses execution before irreversible actions and surfaces the proposed action with reasoning for human decision.

- Post-action audit lets AI act immediately on reversible decisions while humans sample and review afterward.

- Confidence-based routing automates the decision between these two modes.

LangGraph's interrupt() function has become a popular implementation choice — it pauses graph execution mid-workflow, waits for human input, and resumes cleanly. For asynchronous workflows, tools like HumanLayer route decisions to Slack channels, email, or dashboards for non-blocking review.

The real payoff comes from the feedback loop. When reviewers consistently downgrade outputs for the same reason, it triggers prompt revision or preprocessing changes. When a task class always requires intervention, it signals the task should not be automated yet. AWS demonstrated an 80% reduction in subject matter expert workload by combining RLHF with RLAIF — AI generates initial evaluations, humans verify rather than create from scratch. Cursor processes 400 million requests daily for its Tab feature and runs an online RL pipeline that updates based on user acceptance rates within hours, producing a 28% increase in code acceptance.

Domain-specific patterns that work in practice

Real-world HITL implementations reveal sharp domain-specific patterns. In financial services, Ramp's policy agent handles over 65% of expense approvals autonomously, but every new capability goes through shadow mode testing first — the agent predicts actions while an LLM judge compares predictions against actual human decisions, only going live after hitting accuracy thresholds. No real money at risk during training. That discipline is rare.

In healthcare, AI-assisted radiology readers improved their diagnostic accuracy from kappa scores of 0.6 to 0.9, matching specialist radiologists. But here is the uncomfortable finding: endoscopists using AI for three months saw detection rates decline after the AI was turned off. Deskilling risk is real, and nobody has a great answer for it yet.

In legal tech, AI reviews contracts 80% faster than humans with 94% accuracy. Sounds great — until you learn that only 68% of GPT-4's contract-related responses were deemed “practically viable” by legal experts. Human oversight is non-negotiable for consequential legal work.

Audit trails are not optional — they are legally mandated

If you think audit logging is a nice-to-have, the EU disagrees. The EU AI Act's Article 12 requires high-risk AI systems to technically allow automatic recording of events over the lifetime of the system, with tamper-resistant logs retained for a minimum of six months. Article 26 mandates that deployers assign human oversight to competent persons and monitor system operation. Penalties reach €35 million or 7% of global turnover. And these are not future requirements — governance rules and general-purpose AI obligations became applicable in August 2025, with high-risk system rules taking full effect by August 2026.

GDPR adds another layer. Article 22 gives data subjects the right not to be subject to decisions based solely on automated processing that produces legal or similarly significant effects. Any AI system making consequential decisions about individuals — loan approvals, hiring, insurance pricing — must provide human intervention mechanisms, explanations of the logic involved, and the ability for individuals to contest decisions. No audit trail, no compliance. No compliance, no deployment.

The good news: observability tooling has caught up. OpenTelemetry's GenAI Semantic Conventions (v1.37+) have become the industry standard for LLM telemetry, defining common attributes like gen_ai.request.model, gen_ai.usage.input_tokens, and gen_ai.provider.name across vendors. Datadog's LLM Observability natively supports OTel GenAI conventions, correlating LLM spans with traditional APM traces. Arize AI's open-source Phoenix uses the OpenInference specification built on OpenTelemetry. LangSmith captures full execution trees — tool selections, retrieved documents, exact parameters at every step — with annotation queues that let domain experts review and label traces.

What do production teams actually log per inference call? More than you might expect:

- Full input/output content (or cryptographic hashes for sensitive data)

- Model name and version

- Prompt template ID and version

- Token counts broken down by input, output, cached, and reasoning tokens

- Calculated cost and latency metrics (total duration, time to first token, LLM processing time)

- Temperature and sampling parameters

- Finish reason

- Trace and span IDs for distributed tracing

- Business metadata like user ID, environment, A/B test group, and feature flags

Prompt versioning has become a first-class engineering discipline. Best practice follows semantic versioning (major.minor.patch), with immutable versions — once created, a prompt version is never modified. Changes create new versions deployed through environment-based promotion (dev → staging → production), with every production trace linked to the exact prompt version used. Tools like LangSmith, Langfuse, Braintrust, and Helicone all support this natively. ISO 42001, the world's first certifiable AI management system standard, has been adopted by Microsoft, Google Cloud, and AWS — establishing baseline governance expectations for enterprise AI.

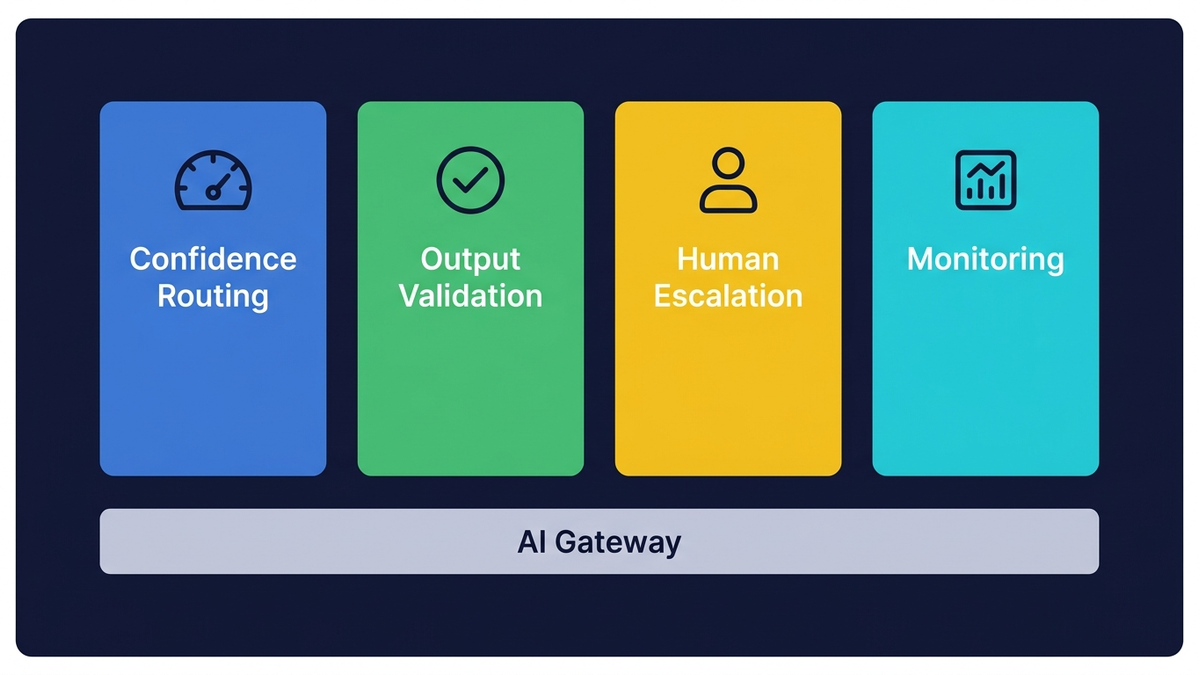

How the four pillars connect into a unified reliability layer

None of these pillars work in isolation. The reference architecture emerging across production deployments stacks them into a layered system:

- The application layer handles feature flags, canary routing, and A/B testing for prompt changes.

- The AI gateway layer — implemented through tools like Portkey (processing 10 billion+ requests monthly), LiteLLM, or TensorZero — manages multi-provider routing, rate limiting, caching, circuit breakers, and observability.

- The guardrails layer enforces input and output validation through NeMo Guardrails, Guardrails AI, Llama Guard, or cloud-native options like AWS Bedrock Guardrails.

- The evaluation layer runs CI/CD checks through DeepEval and Promptfoo, red-team scans pre-deployment, and continuous production monitoring.

Circuit breakers adapted for AI add a critical resilience pattern. Unlike traditional circuit breakers that trip on HTTP errors, AI circuit breakers must also track quality failures— if an LLM returns malformed JSON or hallucinated data three times consecutively, the circuit trips even if API calls technically “succeeded.” Cox Automotive implements hard limits: conversations exceeding 20 turns or costs hitting P95 thresholds trigger automatic graceful handoff to human agents.

The December 2024 multi-provider outage proved why this matters. OpenAI went dark for four hours while Claude and Gemini degraded simultaneously. The teams that survived had graceful degradation chains — a five-level hierarchy: primary model to cheaper/faster model to cached responses to rule-based heuristics to human escalation. An airline's scheduling system survived a cloud outage by switching to a heuristic optimizer (valid in 90% of cases), then a rule engine for simple constraints, flagging the remaining 5% for manual review — zero flight cancellations. That is the kind of resilience engineering that separates production systems from science projects.

Prompt changes deserve the same deployment caution as code changes — arguably more, because their effects are harder to predict. New prompt versions receive 1–5% of production traffic while quality metrics — not just error rates — are tracked. Prompt changes can have subtle quality impacts invisible in system health metrics, requiring longer observation windows for statistical significance. Only after quality confirmation does traffic gradually ramp to 100%.

Lessons from the teams getting this right

The clearest lesson from production: the bottleneck is engineering, not intelligence. The teams shipping reliable LLM systems look like teams shipping any other critical infrastructure — disciplined about failure modes, rigorous about evaluation, pragmatic about which components need to be bulletproof. Ramp shadow-tests every new AI capability against real financial transactions without risking a dollar. Stripe built a domain-specific foundation model that improved card-testing fraud detection from 59% to 97% accuracy while recovering $6 billion in legitimate payments. Shopify serves 30 million predictions daily across 10,000+ product categories with an 85% merchant acceptance rate.

And yet — Gartner predicts 40% of agentic AI projects will be canceled by 2027 due to escalating costs, unclear business value, or inadequate risk controls. Forty percent. The survivors will be the teams that treated guardrails as core infrastructure from day one — moving safety logic out of prompts and into code, where architectural constraints provide guarantees that prompt engineering never could.

The guardrails do not slow you down — they make it safe to move fast

Production AI reliability is converging on a clear architectural pattern: confidence-aware routing, layered validation, structured human escalation, and comprehensive observability — all orchestrated through AI gateways that serve as the central control plane. The defining shift of 2024–2025 has been the systematic movement of safety logic from prompts into infrastructure.

Organizations with mature governance frameworks achieve 3x faster time-to-productionfor new AI features, not despite the guardrails but because of them. That finding alone should end every “guardrails slow us down” argument in every planning meeting, forever. The engineering is the product. The guardrails are the feature.